The Evolution of Artificial Intelligence

Introduction

Artificial Intelligence (AI), an interdisciplinary branch of computer science, has emerged as an integral part of modern society. The past few decades have seen tremendous progress in AI, fueled by advancements in computational technology and a better understanding of human intelligence.

Inception (1950s – 1970s)

AI’s roots trace back to 1950, when Alan Turing published his seminal paper “Computing Machinery and Intelligence.” The paper opened with the question “Can machines think?” and proposed the “imitation game,” now known as the Turing Test, as a way to judge whether a machine’s conversational behavior can be distinguished from a human’s.

In 1956, the Dartmouth Conference officially launched AI as a field and popularized the term “artificial intelligence,” which John McCarthy had coined in the proposal for the event. Early AI research thrived over the next two decades, with programs designed to mimic human problem-solving and learning. A key achievement was ELIZA, an early natural language processing program created by Joseph Weizenbaum at MIT and published in 1966.

Another significant development was Shakey the Robot, built between 1966 and 1972 at the Stanford Research Institute (now SRI International). It was the first general-purpose mobile robot able to reason about its own actions.

AI Winter and Revival (1980s – 1990s)

The late 1970s brought dwindling interest and funding, a period known as the first “AI Winter.” AI’s potential had been overpromised, and disillusionment set in when the technology fell short of expectations. In the early 1980s, expert systems, programs designed to solve complex problems in specific domains, revived the field, although funding contracted again in the late 1980s in a second AI winter.

The 1990s marked a shift from knowledge-driven approaches toward data-driven ones. The decade’s best-known milestone, however, was IBM’s Deep Blue, a search-based chess system that defeated world champion Garry Kasparov in a six-game match in 1997. It was the first time a computer had beaten a reigning world chess champion in a match under standard tournament conditions.

Emergence of Modern AI (2000s – Present)

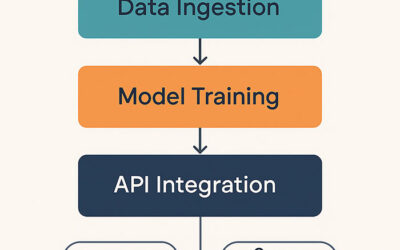

The rise of the internet and big data in the 2000s marked a turning point in AI development. Machine learning, a subfield of AI that uses statistical techniques to let systems improve their performance with experience, became the dominant approach.

In 2012, a breakthrough came when AlexNet, a deep convolutional neural network developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, won the ImageNet Large Scale Visual Recognition Challenge with a top-5 error rate of 15.3%, far ahead of the runner-up’s 26.2%. This result catalyzed the deep learning boom, leading to notable applications in computer vision, speech recognition, and natural language processing.

Perhaps one of the most publicized events came in 2016, when AlphaGo, developed by Google’s DeepMind, defeated Lee Sedol, one of the world’s strongest Go players, 4–1. Many experts had expected such a win to be at least a decade away because of the game’s complexity.

Natural language processing advanced just as dramatically. In 2018, OpenAI introduced its first GPT (Generative Pre-trained Transformer) model, and successors such as GPT-3 (2020) could generate human-like text: answering questions, writing essays, and even composing poetry. In November 2022, OpenAI released ChatGPT, a conversational system built on these models, which brought large language models to a mass audience.

Conclusion

As of 2023, AI continues to transform sectors ranging from healthcare to finance to transportation. A 2019 Gartner survey of CIOs found that 37% of organizations had implemented AI in some form, a 270% increase over the previous four years.

However, AI’s journey is far from over. Future AI research aims to tackle more complex problems, improve efficiency, and provide more personalized experiences. It also seeks to address ethical considerations, a crucial aspect often overlooked in the race for advancement.

The history of AI is a testament to human ingenuity and the quest to understand and replicate our intelligence. From its inception to today, AI has moved from concept to reality, impacting our lives in ways previously only imagined in science fiction.